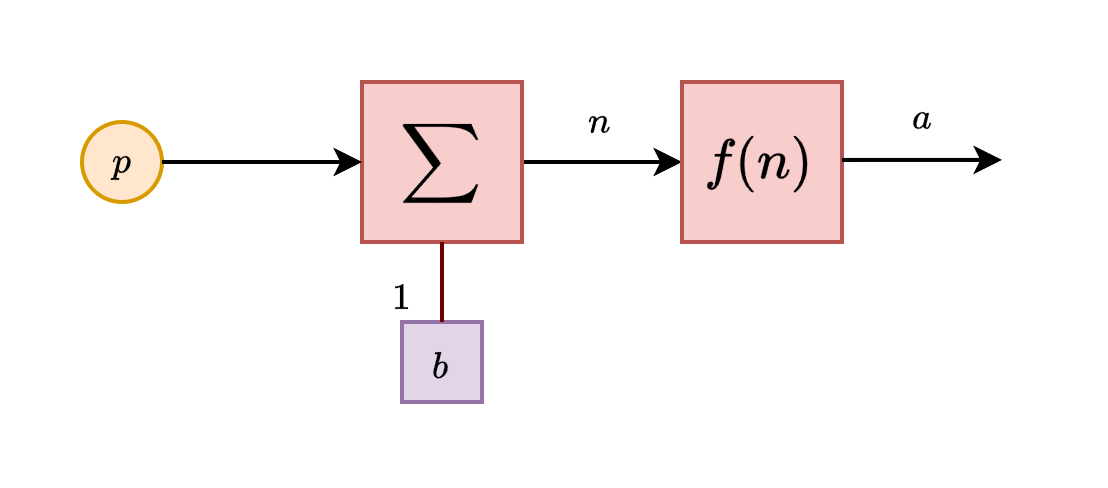

Basic neuron schema

The neuron model is fairly simple. Starting from a conveniently simplified model of the biological neuron, we develop it into a specific unit, which from now on is simply called a neuron. We will also illustrate its main building blocks, their functions and operating ranges, and the possible ways of organizing such units into 'neural networks'. The more complex architectures introduced after this section still build on this chapter for their basic operational modules.

Single-input neuron

A single-input neuron receives only one input channel and hence produces only one output. Suppose the input to the neuron is $x$. The receiving side of the neuron first applies a linear modifier to it, $wx + b$. Here $b$ is called the bias, which shifts the incoming signal, while $w$ is the weight, which controls the relative strength of the input. We assume that the numerical encoding and all operations take place over the field $\mathbb{R}$.

The processed input is then fed into a function, usually called the activation function or transfer function in the literature. Its role is either simply to introduce nonlinear behaviour or to gauge the data and give it a particular interpretation for the specific neuron; what we mean by this will be clarified in later sections. Hence, with $x$ the input, $w$ the weight, and $b$ the bias, the full inner working of the model can be written as $f(wx + b)$ for an arbitrary function $f : \mathbb{R} \to \mathbb{R}$, since we restrict it to a single input. Below is an illustration of such a neuron unit.

In total, the output of this neuron is $a = f(wx + b)$, the transfer function $f$ applied to the weighted and shifted input. The actual output depends on the particular transfer function chosen. So far, the transfer function is the only rigid part of the entire neuron. Hence we also call this type a static neuron, especially when the function that interprets the neuron's input is fixed; usually the designer tailors it to a specific purpose. Some transfer functions work better than others in specific cases. An activation function can therefore encode, or take advantage of, specific properties of the data, for example geometric properties of the observable landscape.
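As a minimal sketch of the single-input neuron described above, the Python snippet below scales the input by a weight, shifts it by a bias, and passes the result through a transfer function. The names `single_input_neuron`, `weight`, `bias`, `transfer`, and `identity` are illustrative assumptions, not notation taken from the text.

```python
from typing import Callable


def single_input_neuron(
    x: float,
    weight: float,
    bias: float,
    transfer: Callable[[float], float],
) -> float:
    """Apply the linear modifier to the input, then the transfer function."""
    net_input = weight * x + bias  # weight scales the input, bias shifts it
    return transfer(net_input)


def identity(n: float) -> float:
    """Simplest possible transfer function: pass the value through unchanged."""
    return n


if __name__ == "__main__":
    # With the identity transfer function the neuron is just a line.
    print(single_input_neuron(2.0, weight=3.0, bias=-1.5, transfer=identity))
```

Swapping `identity` for any other function of one real argument changes the neuron's behaviour without touching the linear part, which is the sense in which the transfer function is the designer's main choice here.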

Transfer function

Let us now discuss the transfer function in more detail. So far, it is the most important aspect of a typical neuron. The transfer function can be linear or nonlinear, and it shapes the input-output characteristic of a single-input neuron into that of the chosen function.

Hard limit ($\texttt{hardlim}$)

The hard limit transfer function, used for distinct categorical sorting, has the input-output characteristic

$$
\texttt{hardlim}(n) =
\begin{cases}
0, & n < 0, \\
1, & n \geq 0.
\end{cases}
$$

If we let the inhibitory value, that is, the zero, be an adjustable variable instead, the function becomes the dynamic hard limit function.

You can even let the absolute size of the jump exceed $1$, though under the logical design such a value has no substantive interpretation.

Symmetric hard limit ($\texttt{hardlims}$)

This one is a variation of $\texttt{hardlim}$ in which the discrete binary channel is $\{-1, 1\}$ instead of $\{0, 1\}$. The hard limit is better suited to probabilistic or logical settings, while the symmetric hard limit is preferable in certain other settings, for example fuzzy logical domains or directed value functions. Coincidentally, $-1$ to $1$ is also the range in which certain sigmoidal variants take their values. The symmetric hard limit is defined by

$$
\texttt{hardlims}(n) =
\begin{cases}
-1, & n < 0, \\
1, & n \geq 0.
\end{cases}
$$
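The two hard limit variants can be sketched in Python as follows. This is only an illustration: the function names mirror the labels used in the headings, and sending an input of exactly zero to the upper value is an assumed convention.

```python
def hardlim(n: float) -> int:
    """Hard limit: 0 for negative input, 1 otherwise (zero maps to 1 by assumption)."""
    return 1 if n >= 0 else 0


def hardlims(n: float) -> int:
    """Symmetric hard limit: -1 for negative input, 1 otherwise."""
    return 1 if n >= 0 else -1


if __name__ == "__main__":
    for value in (-2.0, 0.0, 0.5):
        print(value, hardlim(value), hardlims(value))
```

Both functions discard the magnitude of the input and keep only which side of zero it falls on, which is why they suit categorical decisions.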

Linear family ($\texttt{purelin}$, $\texttt{satlin}$, $\texttt{satlins}$)

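There are many ways to structure a linear input-output processing node. Usually we have the pure linear channel $\texttt{purelin}$, the saturating linear channel $\texttt{satlin}$, and the symmetric variation of the saturating linear channel, $\texttt{satlins}$. We treat them together because they belong to the same family.

The linear one is simple: $\texttt{purelin}(n) = n$. For the saturating linear function, the signal is inhibited toward the two ends:

$$
\texttt{satlin}(n) =
\begin{cases}
0, & n < 0, \\
n, & 0 \leq n \leq 1, \\
1, & n > 1.
\end{cases}
$$

A generalization replaces the linear segment by a function $g$ whose values are confined to this range. That is,

$$
\texttt{satlin}_g(n) =
\begin{cases}
0, & n < 0, \\
g(n), & 0 \leq n \leq 1, \\
1, & n > 1,
\end{cases}
\qquad 0 \leq g(n) \leq 1,
$$

which might lead to undesired behaviour or simply to discontinuous values, but we will have to resolve that later on, if ever. And finally, the symmetric version of the saturating linear function is

$$
\texttt{satlins}(n) =
\begin{cases}
-1, & n < -1, \\
n, & -1 \leq n \leq 1, \\
1, & n > 1,
\end{cases}
$$

which also has the same generalized form.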
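The linear family can be sketched in Python by clamping. The bounds used here, $0$ and $1$ for the saturating version and $-1$ and $1$ for the symmetric one, are the usual conventions and are assumed rather than taken verbatim from the text.

```python
def purelin(n: float) -> float:
    """Pure linear channel: the output equals the input."""
    return n


def satlin(n: float) -> float:
    """Saturating linear channel: linear on [0, 1], clamped outside it."""
    return min(1.0, max(0.0, n))


def satlins(n: float) -> float:
    """Symmetric saturating linear channel: linear on [-1, 1], clamped outside it."""
    return min(1.0, max(-1.0, n))


if __name__ == "__main__":
    for value in (-3.0, -0.5, 0.25, 2.0):
        print(value, purelin(value), satlin(value), satlins(value))
```

Unlike the hard limit functions, these keep the magnitude of the input inside the linear band and only discard it once the signal saturates.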

Sigmoid ($\sigma$) and log-sigmoid ($\log \sigma$)

The sigmoid function is fairly simple. Instead of imposing a piecewise saturating condition, we look for an expression that gives a continuous function saturating on both sides:

$$
\sigma(n) = \frac{1}{1 + e^{-n}}.
$$

A somewhat more complicated, and often reductive, version of it is the log-sigmoid function, $\log \sigma(n) = -\log\left(1 + e^{-n}\right)$. Interestingly, differentiating the log-sigmoid gives back a sigmoid, $\frac{d}{dn} \log \sigma(n) = 1 - \sigma(n) = \sigma(-n)$, while differentiating the sigmoid gives $\sigma'(n) = \sigma(n)\left(1 - \sigma(n)\right)$.
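The derivative relations for the sigmoid and log-sigmoid can be checked numerically. The sketch below assumes the log-sigmoid is the natural logarithm of the sigmoid, $-\log(1 + e^{-n})$, and uses a central finite difference; the helper name `central_difference` and the step size are illustrative choices, and the functions are meant for moderate inputs only.

```python
import math
from typing import Callable


def sigmoid(n: float) -> float:
    """Logistic sigmoid 1 / (1 + e^(-n)); values lie strictly between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-n))


def log_sigmoid(n: float) -> float:
    """Natural log of the sigmoid, written as -log(1 + e^(-n))."""
    return -math.log1p(math.exp(-n))


def central_difference(f: Callable[[float], float], n: float, h: float = 1e-6) -> float:
    """Approximate f'(n) with a symmetric finite difference."""
    return (f(n + h) - f(n - h)) / (2.0 * h)


if __name__ == "__main__":
    n = 0.7
    # d/dn log(sigmoid(n)) should match sigmoid(-n) = 1 - sigmoid(n).
    print(central_difference(log_sigmoid, n), sigmoid(-n))
    # d/dn sigmoid(n) should match sigmoid(n) * (1 - sigmoid(n)).
    print(central_difference(sigmoid, n), sigmoid(n) * (1.0 - sigmoid(n)))
```

Each printed pair should agree to several decimal places, up to finite-difference error.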

Hyperbolic tangent ($\tanh$)

The hyperbolic tangent transfer function adopts the hyperbolic function of the same name. Its range lies in $(-1, 1)$, putting it on par with the symmetric saturation functions. Normally we regard it as a somewhat narrower (by width) symmetric version of the sigmoid. It is formulated as

$$
\tanh(n) = \frac{e^{n} - e^{-n}}{e^{n} + e^{-n}}.
$$

Also interestingly, the hyperbolic tangent is self-referential, as is evident from its derivative:

$$
\frac{d}{dn} \tanh(n) = 1 - \tanh^{2}(n).
$$

These functions can be used in much the same way as when we formulate a binary classification, or binary categorization, problem.
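Returning to the hyperbolic tangent itself, two of its properties can be checked numerically in a short Python sketch: that its derivative is $1 - \tanh^2(n)$, and that it is a rescaled logistic sigmoid, $\tanh(n) = 2\sigma(2n) - 1$, which is one way to read "narrower symmetric version of the sigmoid". The helper names and the test point are illustrative assumptions.

```python
import math
from typing import Callable


def sigmoid(n: float) -> float:
    """Logistic sigmoid 1 / (1 + e^(-n))."""
    return 1.0 / (1.0 + math.exp(-n))


def tanh_from_sigmoid(n: float) -> float:
    """Hyperbolic tangent written as a shifted, rescaled sigmoid."""
    return 2.0 * sigmoid(2.0 * n) - 1.0


def central_difference(f: Callable[[float], float], n: float, h: float = 1e-6) -> float:
    """Approximate f'(n) with a symmetric finite difference."""
    return (f(n + h) - f(n - h)) / (2.0 * h)


if __name__ == "__main__":
    n = 0.3
    # Derivative check: numerical slope versus 1 - tanh(n)^2.
    print(central_difference(math.tanh, n), 1.0 - math.tanh(n) ** 2)
    # Rescaling check: library tanh versus the sigmoid-based form.
    print(math.tanh(n), tanh_from_sigmoid(n))
```

The factor of two inside the sigmoid is what makes the transition steeper, and the outer rescaling moves the range from $(0, 1)$ to $(-1, 1)$.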

The activation function is one of the most important parts of a neuron. With the input-processing unit fixed, the activation function lets us encode the particular interpretation that the neuron operates on. For example, the sigmoidal mode is in practice a polar binary comparison, with values in the range $(0, 1)$ and a curve that saturates toward both ends. Designing a network around such choices is therefore one of the most important aspects of the neuron structure. But what happens if you let many neurons work together?