A bit on algorithmic complexity

It’s been a while since I started learning theoretical computer science, and I think perhaps, I should do a bit of refactoring, and looking back the basic. One of such basic is the one that I pretty much struggled back in the day, very, that is asymptotic analysis.

Concept

Algorithms Runtime Analysis

We want to measure runtime as a function of size of input. Note that this does not depend on machine, operating system or language. Size of input is usually number of bits needed to encode the input instance, can be length of an array.

There is an underlying assumption that all elementary operation takes a constant amount of time. ## Best/Worst/Average case For a fixed input size there could be different runtime depending on the actual instance. We are generally interested in the worst case behaviour.

Let \(T(I)\) be time, algorithm takes on an instance \(I\). The best case running time is defined to be the minimum value of \(T(I)\) over all instances of the same size \(n\).

Hence, 1. Best case:

\[t_{best}(n)=\mathrm{MIN}_{I:\lvert I \rvert =n}\{ T(I) \}\]

- Worst case:

\[t_{best}(n)=\mathrm{MAX}_{I:\lvert I \rvert =n}\{ T(I) \}\]

- Average case:

\[t_{best}(n)=\mathrm{AVERAGE}_{I:\lvert I \rvert =n}\{ T(I) \}\]

Asymptotic Analysis of functions

Asymptotic analysis consider growth of functions on large inputs.

The asymptotic behavior of \(f(n)\) refers to the growth of \(f\) as \(n\) gets large. Asymptotic analysis of function enables us to compare functions.

Upper bound - Big \(O\) notation

Big \(O\) notation

A function \(g(n)\in O(f(n))\) if there exists constant \(c>0\) and \(n_{0}\geq 0\) such that \[g(n)\leq cf(n)\quad \forall n\geq n_{0}\] This can be interpreted as the expansion of the definition of \(a\leq b\) to functions.

This says that \(g(n)\) is kind of less than \(f(n)\) for all values of the domain bigger than the threshold \(n_{0}\), to a certain ratio.

Various way to refer to this are \(g(n)\) is asymptotically dominated by \(f(n)\). Here we then define \(f(n)\) as the asymptotic upper bound on \(g(n)\). By the abuse of notation law (which should, and should not exist), we denote

\[g(n)=O(f(n))\]

for convenience. ## Rule for function simplification Some rules that help simplify functions by omitting dominated terms and ignoring coefficients.

- \(n^{a}\) dominates \(n^{b}\) if \(a>b\).

- Multiplicative constants can be omitted.

- Any exponential dominates any polynomial.

- Any polynomial dominates any logarithm.

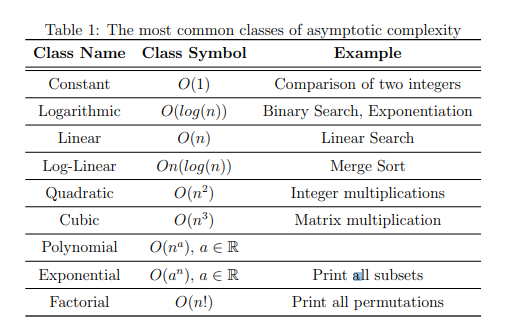

Asymptotic-Complexity Classes

There are complexity classes of function that usually occur in algorithm analysis.

The function grow faster from right to left, i.e.

The function grow faster from right to left, i.e.

\[n!\gg 2^{n}\gg n^{3}\gg n^{2}\gg n\log{(n)}\gg n\gg \log{(n)}\gg 1\]

Here \(a\gg b\) means that \(a\) is much larger than \(b\). The case \(O(1)\) is the best possible case in this consideration.

Asymptotic Lower Bounds - Big \(\Omega\) Notation

Big \(\Omega\) Notation

A function \(g(n)\in \Omega(f(n))\) if there exists constant \(c>0\) and \(n_{0}\geq 0\) such that \[g(n)\geq cf(n)\quad \forall n\geq n_{0}\]

We write this as \(g(n)\in \Omega(f(n))\). \(g\) in this case as being asymptotically lower bounded by \(f\). By this, we also have the reverse condition:

\[g(n)\in O(f(n))\iff f(n)\in \Omega(g(n))\]

Asymptotic Tight Bounds - Big \(\Theta\) Notation

We have the following definition:

Big \(\Theta\) Notation

A function \(g(n)\) is \(\Theta(n)\) iff there exists two positive real constants \(c_{1},c_{2}\) and \(n_{0}\in\mathbb{Z}^{+}\) such that

\[c_{1}f(n)\leq g(n)\leq c_{2}f(n)\]

For all \(n>n_{0}\), and \(n_{0}=max[n_{1},n_{2}]\).

This effectively puts a tight bound including the lower limit of the central tendency. The notation for this can also be \(f \asymp g\), such that \[\Theta(g) = \{ f: \mathbb{N}\to \mathbb{R}\mid f\asymp g \}\] is the set of functions that have the same order of growth as \(g\). If \(f\in\Theta(g)\) or \(f \asymp g\), we say that \(g\) is an asymptotically tight bound for \(f\).

Proposition on \(\Theta(g)\)

Let \(f\) and \(g\) be functions from \(\mathbb{N}\to \mathbb{R}\). If \(g\) is a positive function and the limit

\[d=\lim_{ n \to \infty } \frac{|f(n)|}{|g(n)|}\]

exists and is a nonzero real number \(d\), then \(f\in\Theta(g)\).

Proof

It follows from the definition of the limit that for each \(\epsilon>0\), there exists a natural number \(n_{\epsilon}\) such that

\[d-\epsilon \leq \frac{|f(n)|}{|g(n)|} \leq d+\epsilon\]

for all \(n\geq n_{\epsilon}\). In other words, for the constant \(c=d-\epsilon\) and \(C=d+\epsilon\), there exists an \(n_{\epsilon}\) such that

\[c|g(n)|\leq|f(n)|\leq C|g(n)|\]

holds for all \(n\geq n_{\epsilon}\), which proves that \(f\in\Theta(g)\).

From this, we also give the corollary as followed:

If two functions \(f\) and \(g\) are asymptotically equal, \(f\sim g\), then they have the same order of growth, that is, \(f\asymp g\).

Little \(o\) Notation

We have the following definition:

A function \(g(n)\in o(f(n))\) if for every constant \(c> 0\), there exists a constant \(n_{0}\geq 0\) such that

\[g(n)\leq cf(n)\quad \forall n\geq n_{0}\]

This definition is used to show that \(g\) grows much slower than \(f\). We also have that

\[f(n)\in o(g(n))\iff \begin{cases} f(n)\in O(g(n)) \\ f(n) \not\in \Theta(g(n)) \end{cases}\]

By the definition of little \(o\) notation, we can also see it has a dampening effect - for \(O(f(n))\), you have that there exists a \(c>0\), here, we have for all \(c>0\). An equivalent formulation when \(f(n)\) is non-zero is given as:

\[\lim_{ n \to \infty } \frac{g(n)}{f(n)} = 0\]

Little \(\omega\) Notation

A function \(g(n)\in \omega(f(n))\) if for every constant \(c>0\), there exists constant \(n_{0}\geq 0\) such that

\[g(n)\geq cf(n)\quad\forall n \geq n_{0}\]

This is written as \(g(n)\in \omega(f(n))\), and is used to indicate the larger lower bound of \(\Omega(f(n))\) - \(f\) grows much faster than \(g\).

Reference

- CS310 - Algorithm - Lecture 5

- Lecture Notes on computational complexities (Luca Trevisan)

- Wikipedia page on Asymptotic Notation - It seems, for the present, little \(o\) notation do not have many usages.